DynaSat is developing a dynamic satellite network management system that supports adaptive, fault-tolerant, and secure communications for DARPA’s F6 distributed satellite architecture. It leverages ISI’s X-Bone virtual network system and NetStation distributed PC architecture system, integrated on flight-capable hardware to deliver a space-based Internet for distributed satellite data collection applications.

Network Architecture

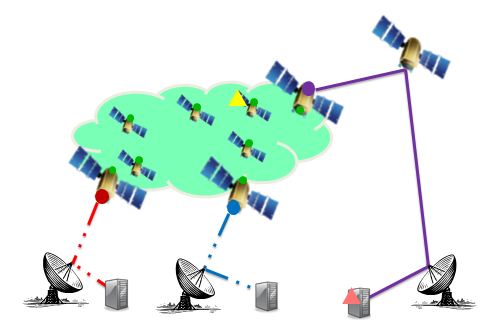

Initial

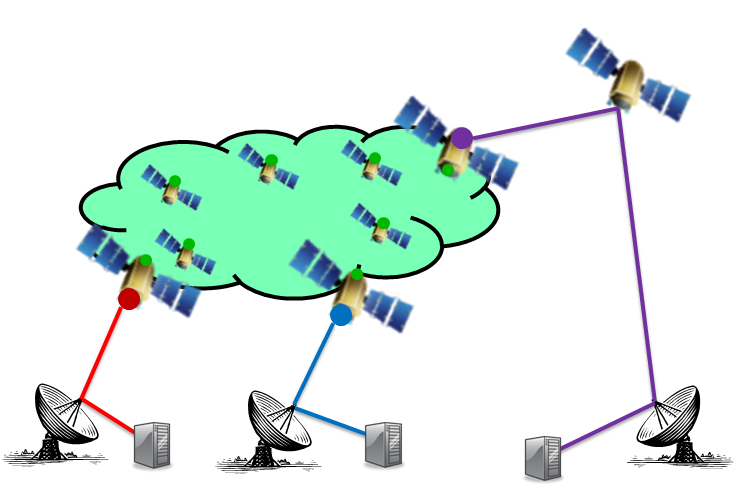

F6 modules (satellites with green dots) are part of a single L2 subnet, configured by TA2 components. This includes modules with persistent downlinks (which also have a purple dot) or intermittent direct downlinks (red and blue dots).

Each F6 module also autoconfigures an L3 address, determined from the persistent (purple) downlink gateway router. Further configuration is performed via DHCPv6 relayed through that gateway (purple dot).
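The text does not say which autoconfiguration method the modules use. Assuming IPv6 stateless address autoconfiguration (SLAAC) with modified EUI-64 interface identifiers, an address could be derived from the gateway's advertised /64 prefix roughly as follows. The prefix and MAC address are documentation examples, not DynaSat values, and a deployment might instead use privacy or stable-opaque identifiers.

```python
import ipaddress

def eui64_interface_id(mac: str) -> int:
    """Modified EUI-64 interface identifier from a 48-bit MAC (RFC 4291, Appendix A)."""
    octets = [int(part, 16) for part in mac.split(":")]
    if len(octets) != 6:
        raise ValueError("expected a 48-bit MAC address")
    octets[0] ^= 0x02                               # invert the universal/local bit
    eui = octets[:3] + [0xFF, 0xFE] + octets[3:]    # insert FF:FE in the middle
    return int.from_bytes(bytes(eui), "big")

def slaac_address(prefix: str, mac: str) -> ipaddress.IPv6Address:
    """Combine the router-advertised /64 prefix with the EUI-64 interface identifier."""
    net = ipaddress.IPv6Network(prefix)
    if net.prefixlen != 64:
        raise ValueError("SLAAC requires a /64 prefix")
    return net[eui64_interface_id(mac)]
```

For example, `slaac_address("2001:db8:1:2::/64", "00:11:22:33:44:55")` yields `2001:db8:1:2:211:22ff:fe33:4455`.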

Some F6 modules or ground modules also serve as Overlay Managers (triangles).

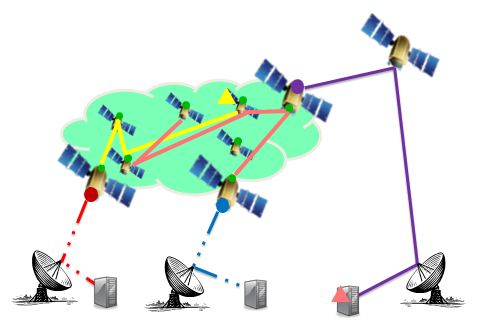

Layer 3 overlay

Overlays are managed by Overlay Managers (triangles), either in space (yellow) or on the ground (pink).

An overlay connects components in space via tunnels (yellow links; pink links). Some modules can be part of more than one overlay at the same time.

Interfaces

DynaSat includes several graphical interfaces:

- Node configuration GUI – an X11 front-end for node certificate and access management configuration

- User configuration GUI – an X11 front-end for user certificate and access control configuration

- Overlay management GUI – a web front-end to the overlay management API

The underlying distributed software system is based on two APIs:

- Overlay management API – an XML-based programmatic user interface for managing overlays

- This includes the X-Bone Overlay Language (XOL), an XML structure for describing overlays

- Overlay control API – a custom language for configuring and monitoring individual components

Installation

Add node

Install the X-Bone software

Log in as root, then do the following (this documents the current development environment; the final package will install in a single integrated action):

- cd /usr/local

- svn co svn://mul.isi.edu/root/SVN/XBone-3.3

- /usr/local/XBone-3.3/trunk/install-me.sh — which does the following:

- cd /usr/local/XBone-3.3/trunk

- cp XBone-3.3-InstallXBone.sh XBone-3.3-InstallPERL.sh /usr/local

- cd /usr/local

- ./XBone-3.3-InstallXBone.sh

- ./XBone-3.3-InstallPERL.sh

Configure the node

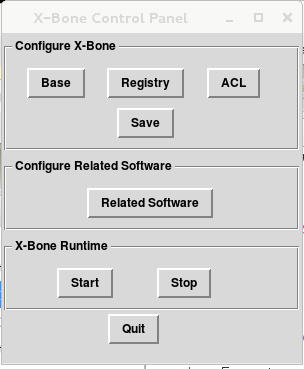

Run /usr/local/bin/xb-node-control:

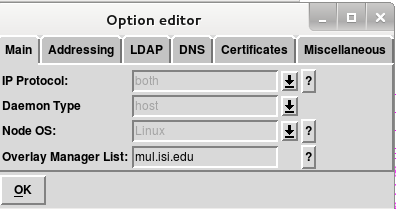

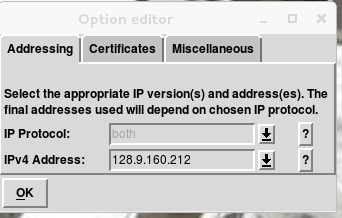

- Set the MAIN options – which protocols are supported, whether this is a host/router/both, the OS type (self-detected, but overridden for buddy hosts), and the valid OM list.

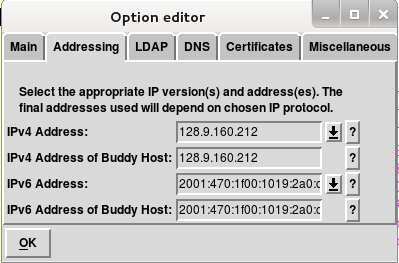

- Set the addresses (self-detected, overridden if desired or for buddy hosts).

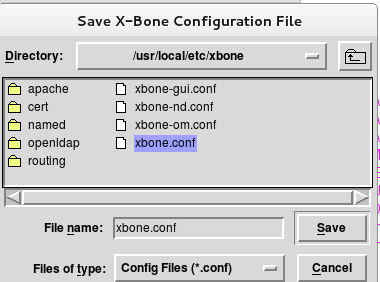

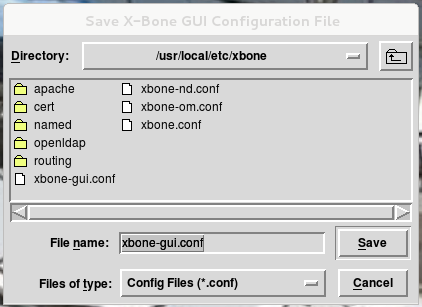

- Save the configuration for restart:

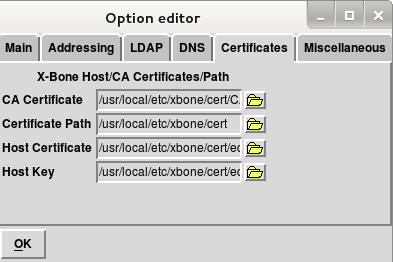

- Add the certificates (master CA and the local host cert).

- Start the system (click START):

Add user

Users are added by augmenting the database of certified users with their associated resource access permissions, as with any MILS system.

Shared infrastructure

Configure the shared infrastructure

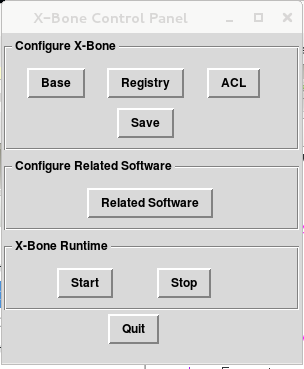

Overlay Manager

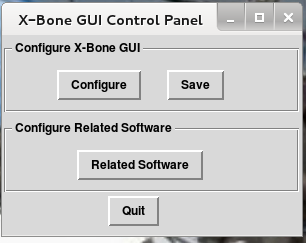

Run /usr/local/bin/xb-gui-control:

DNS server

Provides convenient access to overlay nodes by name.

IP address server

Delegates address blocks; may not be needed for IPv6-only operation.
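As a rough illustration of address-block delegation, the sketch below carves one /64 per overlay out of a larger IPv6 pool using Python's standard `ipaddress` module. The `AddressServer` class, the /48 pool, and the one-/64-per-overlay policy are hypothetical assumptions, not the X-Bone address server's actual behavior; released blocks are never reused here.

```python
import ipaddress
from typing import Iterator

class AddressServer:
    """Hypothetical delegator: hands out one fixed-size block per overlay from a pool."""

    def __init__(self, pool: str, block_prefixlen: int = 64) -> None:
        self._blocks: Iterator[ipaddress.IPv6Network] = ipaddress.IPv6Network(pool).subnets(
            new_prefix=block_prefixlen
        )
        self._assigned: dict[str, ipaddress.IPv6Network] = {}

    def delegate(self, overlay: str) -> ipaddress.IPv6Network:
        # Repeated requests for the same overlay return the block it already holds.
        if overlay not in self._assigned:
            try:
                self._assigned[overlay] = next(self._blocks)
            except StopIteration:
                raise RuntimeError("address pool exhausted") from None
        return self._assigned[overlay]
```

With a pool of `2001:db8:100::/48`, the first two overlays receive `2001:db8:100::/64` and `2001:db8:100:1::/64`.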

Mission deployment

Missions are deployed either through a graphical user interface or directly through a command-language API, and deployment occurs in two major phases:

- resource discovery

- component configuration

The overall protocol is shown below: messages originate at the graphical user interface (GUI, or directly from a programmatic API), are sent to the Overlay Manager (OM), and then on to each of a number of Resource Daemons (RDs).

The second phase itself is a two-phase commit protocol that supports recursion, shown below:
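The recursive two-phase commit can be sketched generically as follows. This is a minimal, hypothetical model rather than the X-Bone implementation: an `OverlayManager` treats a nested `OverlayManager` exactly like a `ResourceDaemon` participant, which is what makes the protocol recursive. Message transport (S/MIME over UDP, SSL over TCP), timeouts, and persistent logging are omitted.

```python
from dataclasses import dataclass, field

class Participant:
    """Anything that can vote in a two-phase commit."""
    def prepare(self) -> bool: raise NotImplementedError
    def commit(self) -> None: raise NotImplementedError
    def abort(self) -> None: raise NotImplementedError

@dataclass
class ResourceDaemon(Participant):
    name: str
    can_allocate: bool = True
    state: str = "idle"

    def prepare(self) -> bool:
        # Phase 1: tentatively reserve local resources, or vote no.
        if self.can_allocate:
            self.state = "prepared"
            return True
        return False

    def commit(self) -> None:
        self.state = "committed"

    def abort(self) -> None:
        self.state = "idle"  # roll back any tentative reservation

@dataclass
class OverlayManager(Participant):
    name: str
    children: list = field(default_factory=list)  # RDs or nested OMs

    def prepare(self) -> bool:
        prepared = []
        for child in self.children:
            if not child.prepare():
                for p in prepared:       # roll back children that already voted yes
                    p.abort()
                return False
            prepared.append(child)
        return True

    def commit(self) -> None:
        for child in self.children:
            child.commit()

    def abort(self) -> None:
        for child in self.children:
            child.abort()

    def deploy(self) -> bool:
        """Top-level entry point: prepare everything, then commit only if all voted yes."""
        if self.prepare():
            self.commit()
            return True
        return False
```

A single refusal anywhere in the tree causes every tentatively prepared RD, including those under nested OMs, to roll back to `idle`.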

Operation

Resource Management

Resource Types

We group resources into the following categories:

- Network capacity

- Processor load

- Memory use

- Device sharing

- Service sharing (e.g., remote operations, such as long timescale file transfers, sequenced image processing, etc.)

Each resource may have the following properties:

- Exclusive vs. concurrent (e.g., to avoid potential covert channels)

- Preemptable vs. non-preemptable

- Scheduled vs. non-scheduled

- Unique or pooled

- Specific per-user access controls or limitations

For each of these resources and properties, we try to use existing mechanisms for resource management where possible. Most conventional components are easily modeled as resources; cameras and sensors may be more challenging because their control and data channels need to be managed together. Much of our expectation for handling sensors is based on file-mapped devices, i.e., where the control channel and data channel are available as virtual files in the file system.
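One possible way to model these categories and properties is sketched below. The class names, units, and admission rules are illustrative assumptions rather than DynaSat's resource model; only the exclusive/concurrent and per-user properties are enforced, while preemption, scheduling, and pooling are left out.

```python
from dataclasses import dataclass, field
from enum import Enum

class Category(Enum):
    NETWORK = "network capacity"
    PROCESSOR = "processor load"
    MEMORY = "memory use"
    DEVICE = "device sharing"
    SERVICE = "service sharing"

@dataclass
class Resource:
    name: str
    category: Category
    capacity: float                    # units depend on the category (e.g., Kbps, MB)
    exclusive: bool = False            # exclusive: at most one mission at a time
    preemptable: bool = False          # recorded only; not enforced in this sketch
    allowed_users: set = field(default_factory=set)  # empty set: no per-user restriction
    grants: dict = field(default_factory=dict)       # mission -> amount granted

    def request(self, mission: str, user: str, amount: float) -> bool:
        """Grant `amount` to `mission` if access rules and remaining capacity allow it."""
        if self.allowed_users and user not in self.allowed_users:
            return False
        if self.exclusive and self.grants and mission not in self.grants:
            return False  # another mission already holds this exclusive resource
        if sum(self.grants.values()) + amount > self.capacity:
            return False
        self.grants[mission] = self.grants.get(mission, 0) + amount
        return True
```

An exclusive camera, for example, refuses a second mission even if it asks for no more than the first one did, which is one way to avoid the covert channels mentioned above.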

Sharing

We expect that a single component can be shared when it exists, concurrently or serially, as an interface or file in different missions. For cameras, this means shared access to the virtual file descriptors of the control and data channels. Other sharing, such as when packets on the interfaces of separate missions share a crosslink or downlink interface, happens through an explicit resource-sharing mechanism (for networking, AQM with RSVP).
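To illustrate how a shared crosslink or downlink might be divided among missions, the sketch below first grants each mission its admitted reservation (as RSVP admission control would) and then splits any leftover capacity max-min fairly among the remaining demand. This is a hypothetical allocation policy, not RSVP signaling or an AQM algorithm, and the max-min rule for the leftover is an assumption.

```python
def share_link(capacity_kbps: float, reserved: dict[str, float],
               demand: dict[str, float]) -> dict[str, float]:
    """Split one link among missions: reservations first, then max-min fair leftover."""
    if sum(reserved.values()) > capacity_kbps:
        raise ValueError("reservations exceed capacity; admission control should have refused one")
    # Each mission first gets the part of its demand covered by its reservation.
    alloc = {m: min(reserved.get(m, 0.0), d) for m, d in demand.items()}
    excess = {m: demand[m] - alloc[m] for m in demand if demand[m] > alloc[m]}
    leftover = capacity_kbps - sum(alloc.values())
    # Water-filling: repeatedly offer an equal share of the leftover to unsatisfied missions.
    while excess and leftover > 1e-9:
        share = leftover / len(excess)
        for m in list(excess):
            give = min(share, excess[m])
            alloc[m] += give
            excess[m] -= give
            leftover -= give
            if excess[m] <= 1e-9:
                del excess[m]
    return alloc
```

On a 500 Kbps link where mission `a` reserves 200 Kbps and the missions ask for 300, 400, and 50 Kbps, the result is 300, 150, and 50 Kbps respectively.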

Fault Tolerance

Striping support

Some striping can be supported at the application layer, e.g., using LFTP or Axel. Striping may also be supported at the network layer using DynaBone multipath overlays (which can also include FEC).
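Application-layer striping tools such as LFTP and Axel download one file over several parallel connections, each fetching a different byte range. The sketch below shows only the range arithmetic and the reassembly step; the network fetches are omitted, and the helper names are hypothetical.

```python
def stripe_ranges(size: int, stripes: int) -> list[tuple[int, int]]:
    """Split a file of `size` bytes into contiguous, inclusive byte ranges, one per stripe."""
    if size <= 0 or stripes <= 0:
        raise ValueError("size and stripes must be positive")
    stripes = min(stripes, size)          # never create empty stripes
    base, extra = divmod(size, stripes)   # the first `extra` stripes get one more byte
    ranges, start = [], 0
    for i in range(stripes):
        length = base + (1 if i < extra else 0)
        ranges.append((start, start + length - 1))
        start += length
    return ranges

def range_header(first: int, last: int) -> str:
    """HTTP Range header value for one stripe (inclusive byte positions)."""
    return f"bytes={first}-{last}"

def reassemble(chunks: dict[int, bytes]) -> bytes:
    """Join stripes keyed by their starting offset, in offset order."""
    return b"".join(chunks[offset] for offset in sorted(chunks))
```

For a 10-byte file in 3 stripes, `stripe_ranges(10, 3)` gives `[(0, 3), (4, 6), (7, 9)]`, and the first stripe would be requested with `Range: bytes=0-3`.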

Intermittent link support

Intermittent file transfers are already supported within a single file by FTP and HTTP (both of which can resume a partial transfer), and across multiple files by rsync. This is more than sufficient for the link pattern expected for LEO downlinks: 1-3 passes per day, each lasting 15-20 minutes.
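The arithmetic behind resuming a transfer across passes is simple: each pass delivers a fixed number of bytes, and the next pass resumes at the last offset. The sketch assumes a steady 500 Kbps direct link (the minimum in the ground-link assumptions below) and 15-minute passes, and ignores connection setup and slow start; the 200 MB file size in the usage note is a hypothetical example.

```python
import math

def bytes_per_pass(rate_bps: float, pass_seconds: float) -> int:
    """Bytes deliverable in one contact window at a steady rate."""
    return int(rate_bps * pass_seconds) // 8

def resume_schedule(file_bytes: int, rate_bps: float, pass_seconds: float) -> list[tuple[int, int]]:
    """Byte ranges [start, end) sent in successive passes, each resuming where the last stopped."""
    per_pass = bytes_per_pass(rate_bps, pass_seconds)
    if per_pass <= 0:
        raise ValueError("link delivers no data in a pass")
    schedule, offset = [], 0
    while offset < file_bytes:
        end = min(file_bytes, offset + per_pass)
        schedule.append((offset, end))
        offset = end  # the next pass restarts here (e.g., FTP restart or an HTTP Range request)
    return schedule

def passes_needed(file_bytes: int, rate_bps: float, pass_seconds: float) -> int:
    return math.ceil(file_bytes / bytes_per_pass(rate_bps, pass_seconds))
```

Under these assumptions each pass carries 56.25 MB, so a 200 MB file needs `passes_needed(200_000_000, 500_000, 900)` = 4 passes, i.e., roughly 1.3-2 days at 2-3 passes per day.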

Fault tolerant files

There are a variety of distributed fault-tolerant file systems, of which we are currently exploring MooseFS. A key concern is the interaction between such a file system and the MLS protection mechanisms; it may be necessary to implement one such file system service for each MLS security domain separately.

Fault tolerant system management

Our system was designed to support warm-spare backups, using shared configuration files and heartbeats. Configuration files are checkpointed with each modification to the system, and can be used for reboot recovery. Distributed copies of the configuration file can be used for spares if a separate process is needed to recover function. That separate process listens for heartbeats from the primary (OM), and activates only when the heartbeats cease. Several layers of spares can be supported with appropriate timeout and activation configuration.
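The warm-spare scheme can be illustrated with a small time-stepped simulation. It assumes, hypothetically, that each spare's timeout grows with its rank so that deeper spares activate only if shallower ones also fall silent, and that every spare hears heartbeats from whichever node is currently active. Reloading the checkpointed configuration is reduced to a comment.

```python
from dataclasses import dataclass

@dataclass
class Spare:
    name: str
    rank: int                   # 1 = first backup, 2 = second backup, ...
    base_timeout: float         # seconds of silence a rank-1 spare tolerates
    last_heartbeat: float = 0.0
    active: bool = False

    @property
    def timeout(self) -> float:
        # Deeper spares wait longer, so normally only the first live spare takes over.
        return self.base_timeout * self.rank

    def hear_heartbeat(self, now: float) -> None:
        self.last_heartbeat = now

    def check(self, now: float) -> None:
        if not self.active and now - self.last_heartbeat > self.timeout:
            # A real spare would reload the checkpointed configuration file here.
            self.active = True

def simulate(spares: list[Spare], primary_fails_at: float, duration: float,
             period: float = 1.0) -> list[str]:
    """Step through heartbeat periods and return the names of spares that activated."""
    t = 0.0
    while t <= duration:
        # Heartbeats come from the primary while it lives, then from any activated spare.
        sender_alive = t < primary_fails_at or any(s.active for s in spares)
        for s in spares:
            if sender_alive and not s.active:
                s.hear_heartbeat(t)
            s.check(t)
        t += period
    return [s.name for s in spares if s.active]
```

With a 3-second base timeout and the primary failing at t = 10 s, only the rank-1 spare activates; the rank-2 spare hears its heartbeats before its own 6-second timeout expires.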

Ground Links

We assume that there are two types of ground links for an F6 cluster:

- at least one persistent low-BW channel via a geosynchronous relay (e.g., Inmarsat)

- 250-1000ms latency to the ground, at least 50Kbps

- optionally, one or more sporadic high-BW channels from certain F6 nodes within the cluster direct to ground

- 200ms or less latency to the ground, over 500Kbps

- connected approx. 2-3x/day for ~15-20 minutes, largely uninterrupted during that period

We expect that each such link terminates at an IP router that is a member of the F6 cluster, i.e., a node with another interface on the F6 wireless network.

Given these assumptions, we rely on a persistent control channel over which management commands, including liveness checks (heartbeats), operate. We also expect that the high-capacity direct downlinks can use conventional pause-resume protocols (FTP, HTTP) for data transfer over existing TCP (possibly modified with a larger initial window, or using high-speed variants).
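The ground-link figures translate into TCP window requirements via the bandwidth-delay product (BDP). The sketch assumes the quoted latencies are one-way (so RTT is twice the latency) and loss-free slow start that doubles the window each RTT; the default 14,600-byte initial window is the cap used by RFC 6928 for a larger initial window.

```python
def bdp_bytes(rate_bps: float, one_way_latency_s: float) -> float:
    """Bandwidth-delay product in bytes, assuming RTT = 2 x one-way latency."""
    return rate_bps * (2 * one_way_latency_s) / 8

def slow_start_rtts(bdp: float, initial_window: float = 14_600) -> int:
    """Loss-free slow-start RTTs (window doubling per RTT) before cwnd covers the BDP."""
    rtts, cwnd = 0, initial_window
    while cwnd < bdp:
        cwnd *= 2
        rtts += 1
    return rtts

# Figures from the ground-link assumptions above.
geo = bdp_bytes(50_000, 1.0)      # persistent relay at worst-case latency: 12,500 bytes
direct = bdp_bytes(500_000, 0.2)  # direct downlink: 25,000 bytes
```

The direct link's 25,000-byte BDP is covered after one doubling from a 14,600-byte initial window, versus four RTTs from a traditional 2-segment (2,920-byte) window.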

Performance

Bandwidth

Our approach uses existing IPsec and IP headers, together with two layers of IP-in-IP tunnel.

For IPv6 packets, assuming L2 headers of approximately 18 bytes and TCP/IPv6 headers of 60 bytes (less for UDP traffic), the minimum uncompressed header overhead is around 78 bytes, so a 100-byte application payload becomes a 178-byte packet.

App-to-app packets of 100 bytes thus already incur 78% additional overhead due to the L2/IP/TCP headers, i.e., only 56% efficiency.

Our approach adds an IPsec header (52 bytes, which varies slightly depending on algorithm), and between 2 and 4 additional IP headers, i.e., an uncompressed additional 132-212 bytes, resulting in an overall packet size of 310-390 bytes.

100 bytes of user data -> 178 bytes as a network packet -> 310-390 bytes over DynaSat, i.e., roughly 26-32% efficiency

The L2 data capacity required for 100 messages/sec, each with a 100-byte payload, is thus approximately 250-312 Kbps, to support a user payload rate of 80 Kbps (i.e., roughly 26-32% efficiency). The added overhead is fixed per packet, so efficiency increases with payload size. The overhead could also be reduced with minimal compression; e.g., using IPv4 headers for the overlay system would require no additional CPU effort for compression/expansion and no storage for conversion tables, yet would reduce the total bandwidth to 216-280 Kbps and increase efficiency by around 5%.
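The overhead arithmetic above can be reproduced with the header sizes quoted in the text. This is a sketch under the same assumptions (18-byte L2 header, 60 bytes of TCP/IPv6, a 52-byte IPsec header, and 40 bytes per extra IPv6 header); real IPsec overhead varies with the algorithm and padding.

```python
# Header sizes taken from the text above (bytes).
L2_HDR = 18
TCP_IPV6_HDR = 60   # 40-byte IPv6 header + 20-byte TCP header
IPSEC_HDR = 52      # varies slightly with the algorithm
IPV6_HDR = 40       # each additional overlay (IP-in-IP) header

def native_packet(payload: int) -> int:
    return payload + L2_HDR + TCP_IPV6_HDR

def dynasat_packet(payload: int, extra_ip_headers: int) -> int:
    return native_packet(payload) + IPSEC_HDR + extra_ip_headers * IPV6_HDR

def required_kbps(payload: int, msgs_per_sec: int, extra_ip_headers: int) -> float:
    return dynasat_packet(payload, extra_ip_headers) * 8 * msgs_per_sec / 1000

for headers in (2, 4):
    size = dynasat_packet(100, headers)
    print(f"{headers} extra IP headers: {size} bytes, "
          f"{required_kbps(100, 100, headers):.0f} Kbps, "
          f"{100 / size:.1%} efficient")
```

The loop prints 310 bytes, 248 Kbps, and 32.3% efficiency for two extra headers, and 390 bytes, 312 Kbps, and 25.6% for four. Calling `dynasat_packet(1000, 4)` instead shows a 1,000-byte payload reaching about 78% efficiency, because the added overhead is fixed per packet.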

Further compression (e.g., ROHC) could achieve even higher efficiency by compressing not only our headers but also the original TCP/IP headers. These numbers are preliminary and do not depend on the number of intermediate nodes.

Efficiency vs. simple IPsec

Simple IPsec would add around 52 bytes (depending on the algorithm) to a base packet and typically operates in tunnel mode (adding another 40 bytes for IPv6), so the overall added overhead for a simple secure network would be 92 bytes on top of 178, for a total of 270 bytes. DynaSat adds only 1-3 additional IPv6 headers, i.e., another 40-120 bytes (310-390 bytes in total), resulting in a relative efficiency of roughly 69-87%.
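The comparison with simple tunnel-mode IPsec reduces to one ratio, sketched here with the same header sizes: the size of the simple IPsec packet divided by the size of the DynaSat packet for 1-3 extra IPv6 headers. The constant names are illustrative.

```python
BASE_PACKET = 178         # 100-byte payload plus 78 bytes of L2/TCP/IPv6 headers
SIMPLE_IPSEC_ADDED = 92   # 52-byte IPsec header + one 40-byte IPv6 tunnel-mode header
IPV6_HDR = 40

def relative_efficiency(dynasat_extra_headers: int) -> float:
    """Simple IPsec tunnel packet size divided by the DynaSat packet size."""
    simple = BASE_PACKET + SIMPLE_IPSEC_ADDED             # 270 bytes
    dynasat = simple + dynasat_extra_headers * IPV6_HDR   # 310-390 bytes for 1-3 headers
    return simple / dynasat

for n in (1, 2, 3):
    print(n, f"{relative_efficiency(n):.0%}")
```

This gives about 87%, 77%, and 69% for one, two, and three extra headers.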

Packet rate

Our approach does not modify the OS kernel's packet-handling mechanisms. However, each packet traverses the kernel at least twice, so the maximum packet rate is reduced by a factor of around 2.

Overlay creation

Overlays currently take around 12 seconds to create, with an additional 2 seconds when IPsec is included. Much of this time is consumed in configurable timeouts, e.g., waiting for all available nodes to respond to an overlay advertisement.

Code size

The current base code is 31,000 lines of Perl, of which 14,000 are custom recursive-descent parsers (much of which will no longer be needed once the language is fully XML-based). This code currently supports three operating systems: FreeBSD, Linux, and Cisco (via a buddy host).

The current script activation and control interface’s memory footprint is 22.9 MB, and the current node daemon’s memory footprint is 63.4MB, for a total of 86.3MB. Much of this size is due to large Perl libraries that are included, even though only small portions are actually used.

Security

Network security

Network security uses the existing Internet IP security (IPsec) protocol extensions, which support both IPv4 and IPv6 operation.

MLS and overlays

The X-Bone assumes an architecture with one Resource Daemon per physical node and one primary Overlay Manager per overlay. This means that a given overlay has only one primary OM, while an RD may be configured by multiple OMs at the same time.

DynaSat addresses MLS by limiting the scope of an OM. Each OM must reside in ONLY ONE security domain, which means that a single overlay likewise exists in ONLY ONE security domain. Missions requiring communication between multiple security domains thus require multiple overlays, which meet on at least one common node where a guard application resides.

Our assumptions model overlays as if they were files, where a single file resides in only one security domain, and cross-domain information sharing occurs only through guard applications.
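The rule that overlays in different domains meet only at a guard node can be checked mechanically when a mission is planned. The sketch below is a hypothetical validator, not part of DynaSat: each overlay carries exactly one domain label, and a cross-domain pair is accepted only if the overlays share at least one node that hosts a guard application.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Overlay:
    name: str
    domain: str               # each overlay carries exactly one security-domain label
    nodes: frozenset[str]

def may_exchange(a: Overlay, b: Overlay, guard_nodes: set[str]) -> bool:
    """True if traffic between two overlays respects the single-domain rule."""
    if a.domain == b.domain:
        return True
    # Cross-domain traffic must pass through a shared node that hosts a guard.
    return bool(a.nodes & b.nodes & guard_nodes)

def violations(pairs: list[tuple[Overlay, Overlay]], guard_nodes: set[str]) -> list[str]:
    """List the mission's overlay pairs that would need a guard but have none."""
    return [f"{a.name}<->{b.name}" for a, b in pairs if not may_exchange(a, b, guard_nodes)]
```

A SECRET overlay on nodes `n1`, `n2` and an UNCLASSIFIED overlay on `n2`, `n3` may exchange data only if `n2` hosts a guard.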

Host security

Host security is provided using a MILS hypervisor with separate guest OS images, where different images support different security levels. Information flow between these images is confined to network paths managed by the DynaSat configuration system.

Covert channel analysis

Brief Outline of DYNASAT Operation

User processes holding X.509 certificates issue requests to Overlay Managers (OMs) to define overlays and deploy applications. OM processes and Resource Daemon (RD) processes exchange control-plane protocol messages to execute those user requests.

To achieve needed MLS security objectives DYNASAT adopts the following conventions:

- An OM process resides exclusively in the security level of the overlay that it creates. Necessarily, if there are N security levels, there must be at least N sets of OMs.

- RD processes manage host resources. They reside in one or more security levels and only carry out resource management functions.

- User processes that are properly authenticated and privileged issue requests to OMs to create overlays at particular security levels.

- Where write down is needed, the user process is responsible for creating a guard (gateway) process that resides in multiple overlays.

OM and RD processes that could perform write-down operations, or instantiate a guard process to do so, are configured at instantiation with membership in particular security levels.

Because an RD manages resources for a host, it normally participates in the multiple security levels defined for its host. RDs therefore can potentially act as guards. As stated above, they are restricted to host resource management functions and do NOT send or receive data-plane packets.

To create an overlay, DYNASAT modifies the following virtual machine state:

- interface table

- routing table

- firewall rules (potentially)

- QOS rules

- IPsec table (keys and security associations)

- DNS resolver configuration
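On a Linux guest, the interface and routing changes in this list could be expressed as iproute2 commands, as in the hypothetical generator below. The tunnel mode (`ip6ip6`), interface name, and addresses are illustrative assumptions; the X-Bone's actual per-OS configuration code is not shown in this document and may differ, and FreeBSD or Cisco hosts would need different commands. IPsec, firewall, QoS, and resolver changes are omitted.

```python
from dataclasses import dataclass

@dataclass
class TunnelSpec:
    name: str          # hypothetical interface name, e.g. "xb0"
    local: str         # this node's underlay (base network) address
    remote: str        # the peer's underlay address
    overlay_addr: str  # this node's overlay address with prefix length
    routes: list[str]  # overlay prefixes reachable through this tunnel

def overlay_commands(spec: TunnelSpec) -> list[str]:
    """Return (but do not run) iproute2 commands for one IPv6-in-IPv6 overlay link."""
    cmds = [
        # Interface table: create and enable the tunnel endpoint, then address it.
        f"ip -6 tunnel add {spec.name} mode ip6ip6 local {spec.local} remote {spec.remote}",
        f"ip link set dev {spec.name} up",
        f"ip -6 addr add {spec.overlay_addr} dev {spec.name}",
    ]
    # Routing table: send overlay prefixes through the tunnel.
    cmds += [f"ip -6 route add {prefix} dev {spec.name}" for prefix in spec.routes]
    return cmds
```

Generating commands rather than executing them keeps the sketch side-effect free; a real deployment would also record each change so that it can be rolled back if the overlay's two-phase commit aborts.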

DYNASAT configures name resolution in overlays such that locally defined name associations are not accessible outside the overlay.

DYNASAT creates overlay networks (VPNs) for each distributed application. These overlays are described and defined via DYNASAT configuration and control protocols based on existing X-Bone protocols. Each DYNASAT overlay resides exclusively within one security level (compartment). Once an overlay is established, payload data sent across it is protected by IPsec encryption (Suite B encryption is currently assumed). Traffic may move to a lower security level only by means of a trusted guard process that is simultaneously a member of (resides on) both the higher and lower security level overlays.

Discussion of DYNASAT Covert Channels

The TCSEC defines a covert channel as a means of circumventing a properly configured and functioning security system to transfer information from a higher security compartment to a lower one. Covert channels are distinct from network steganography techniques.

Mandatory access controls are presumed to be properly configured and functional. Covert channels that use information flow via memory and register state across virtual machines are presumed to be limited by a separation kernel that is properly configured and functional.

DYNASAT relies upon standard techniques presumed to be implemented by the physical and data link layer provider, including the COMSEC features TRANSEC and traffic-flow security. The presence of valid messages on F6 communications links is assumed to be obscured by whatever encryption technology is employed. Packets are therefore considered opaque below the network layer.

Timing channels are difficult to establish in a distributed system without a synchronized clock, and DYNASAT does not use one. A covert timing channel must therefore pass its information across levels within a host. Within the confines of real-time scheduling demands, the separation kernel is assumed to use scheduling jitter to further complicate the operation of a timing channel.

What remains to be considered are other potential cross-level information flows. The potential for DYNASAT to create covert channels relates to patterns of execution and state storage caused by overlay creation, monitoring, or destruction.

There are three basic entities in the DYNASAT system: users, OMs, and RDs. As stated above, these all reside in the same security level. The creation and destruction of overlays modifies the following virtual-machine system state: interface tables, routing tables, and IPsec key association tables. As stated earlier, these tables are local to the virtual machine.

Tech. Area Interactions

Design tools (TA1)

Choosing configurations for an F6 element among a blizzard of alternatives requires a lot of design parameters. TA1 is going to need a great deal of design alternatives data from TA3 eventually. From TA3 those would be items such as motherboard configuration parameters, imposed application limitations, chassis requirements and so forth.

It seems to be a good idea to start this ball rolling now, so that TA1 can take a peek at some of the objects and properties they may need to deal with.

Along those lines we summarize one motherboard and chassis configuration. These are drawn from publicly accessible documents. No doubt some of this is incorrect. Also, the categorization that we suggest below via names and indent levels is merely a ‘for instance’ and to be taken as indication only. The interface description may need further augmentation.

Quite obviously, there will be other meaningful bounds, such as OS memory consumption, available memory for applications and application memory requirements. Those are currently unknown.

Motherboard:

Manufacturer: Space Micro Corp.

Model: Proton400K-L

FormFactor: PCI-104s

Processor:

CPUType: PowerPC

Model: P2020

L2Cache: 512 KBytes

WordSize: 32 bits

Cores: 2

Core: e500

Speed: 1.2 GHz

MIPS: 2.5 GMIPS

DCache: 32 KByte

ICache: 32 KByte

FPU: 1

FPUSize: 32 bit

EventRecovery: voting, 3-way

MaxPower: 12.7 W

MinPower: 8 W

28vPowerConverter: No

MaxDynamicMemory: 512 MBytes

MinDynamicMemory: 128 MBytes

Interfaces: IEEE 1394, cPCI, RS-422

OperatingSystems: VxWorks, Linux

Radiation:

SEU: 1E-4/day

LatchupImmunity: 70 LET

DoseTolerance: 100 krad

FlashType: Radiation hardened NAND

MinFlash: 4 GBit

MaxFlash: 8 GBit

FormFactor: PCI-104s

Size: 90 mm x 127 mm x 18 mm

Weight: 150 g

Chassis:

Manufacturer: Space Micro Corp.

FormFactor: PCI-104s

SizePCI-104s: 102mm x 155 mm x 18 mm

MinWeight: 300 g

PerSliceWeight: 115 g

28vPowerConverter:

Manufacturer: Space Micro Corp.

FormFactor: PCI-104s

Output+12v: 10 W

Output-12v: 10 W

Output+5v: 15 W

Output+3.3v: 10 W

EMIFilter: MIL-STD-461C, MIL-STD-461D

VoltageRegulation: 0.02

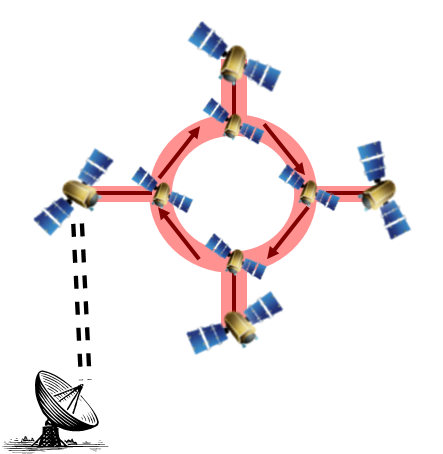

Layer 2 interface (TA2)

We assume a Layer 2 that provides capabilities similar to those of a conventional Ethernet LAN.

This image shows the F6 cluster components as each having a single TA2 interface (green), where the green subnet is the single LAN-like F6 subnet.

Specifically, we assume:

- global static endpoint addresses

- full edge-to-edge reachability (i.e., multihop within the entire LAN)

- multicast/broadcast

- Subnet Bandwidth Manager (SBM) for QoS (RFC 2814)

The figure also shows that at least one node has a link relayed through a geosynchronous satellite (shown in purple, e.g., Inmarsat), and that other nodes in the cluster can have direct ground links (shown in red and blue). The geosynchronous link is always up, while the ground links are up approximately 20 minutes at a time, 2-3x per day.

We anticipate needing SBM support to enable either explicit (RSVP) or implicit (bandwidth manager) QoS support at L3.

Note that most of these requirements are consistent with basic assumptions of efficient L2 support for Internet L3 (RFC 3819).

The result treats the entire F6 cluster as a single Internet subnet. Solutions for intra-subnet forwarding should be addressed at L2, as is common throughout the Internet, to avoid L2/L3 forwarding interactions. This is important because L3 assumes that a link is either point-to-point or shared, and its routing protocols are not efficient when these are mixed within a subnet (or when a link has varying properties).

Cluster flight (TA3)

We currently assume that cluster flight has modest requirements and impact on the overall network architecture as follows:

- Online: ~400-1,000 bytes of state every ~10 minutes, to be shared with all other nodes

- Offline: no communication (0 bytes), with a duration of ~20-30 minutes

- Advance notice: ~5 minutes before each offline event; no notice before returning online
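The cluster-flight traffic figures above imply a very small network load. The sketch computes the aggregate payload rate if every node broadcasts its state once per interval; the 10-node cluster size is a hypothetical example, and packet headers are ignored.

```python
def cluster_flight_bps(state_bytes: int, interval_s: float, nodes: int) -> float:
    """Aggregate payload rate if each node broadcasts its state once per interval."""
    return state_bytes * 8 * nodes / interval_s

def fraction_of_link(load_bps: float, link_bps: float) -> float:
    return load_bps / link_bps

# Worst case from the figures above: 1,000 bytes every 10 minutes, hypothetical 10-node cluster.
load = cluster_flight_bps(1_000, 600, 10)
share = fraction_of_link(load, 50_000)   # against the 50 Kbps persistent relay link
```

At this worst case, 10 nodes generate about 133 bps, roughly 0.27% of the 50 Kbps persistent relay link.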

We anticipate that the cluster flight software will run as follows:

- soft-realtime operation (i.e., needs to complete in a reasonable time, but no hard timing requirements)

- hard-realtime clocks (i.e., needs to know exact time to determine location relative to other nodes)

- advisory-only operation (i.e., sends ‘suggested’ events to a separate on-board flight computer)

In summary, we do not anticipate the need for F6 to run hard realtime flight operations in the shared user multiprocessing environment; instead, we expect it to function as an advisor to a separate on-board hard-realtime flight controller via a protected internal channel. The communications requirements for cluster flight appear to be sufficiently supported by any common data broadcast mechanism, e.g., RSS.

People

Joe Touch – PI

Greg Finn, Goran Scuric, Rick Shiffman – staff

Michael Elkins – subcontractor staff

Publications

J. Touch, G. Finn, G. Scuric, M. Elkins, R. Shiffman, “The DynaSat Information Architecture for Fractionated Satellite Systems,” Proc. AIAA SPACE 2012 Conf., Sept. 2012, DOI: 10.2514/6.2012-5276

Related Projects

Networking and Overlays

X-Bone

The X-Bone is an Internet overlay system that includes both:

- an IP-based overlay architecture

- supports revisitation

- supports recursion

- supports integrated use of per-hop IPsec and dynamic routing

- based on a complete Recursive Network Architecture

- a distributed management system that deploys and monitors overlays

- configuration based on nested transactions with two-phase commit and rollback recovery

- heartbeat-based monitoring with automatic state cleanup

- local control over resource discovery and allocation

- secure command and control based on S/MIME UDP and SSL TCP exchanges

- integrated support for application deployment and management

- integrated support for address allocation

- integrated support for DNS names

- a documented user API, including a separate language for describing deployed overlays

DynaBone

DynaBone is a two-level X-Bone overlay system that provides spread-spectrum-style defense for Internet traffic.

Tethernet

Tethernet is an Internet subnet rental system based on X-Bone that removes the effect of NATs, and thus allows full Internet access even where it is not locally supported.

Computer Architecture

NetStation

NetStation is a distributed computer architecture based on replacing the backplane of a computer with a high-speed network.

ATOMIC-2

ATOMIC-2 investigated the impact of deploying a near-gigabit (640 Mbps) network as the production environment for the entire ISI Computer Networks Division.